Thank you @mdc!! I really appreciate it; that is indeed very helpful. I’m going to be working on it again today, so I’m sure I will come up with more questions! One quick question I have right now is about associating the summary script: the document referenced above says I go to form properties in odkbuild and input a submission URL. What format is that URL? Do I just reference the summary script from the root directory?

summaryscript.js

/summaryscript.js

? Or something else entirely. Also, what is the ui.info() method doing? It looks like it has the results object passed in entirely, and then individual properties passed in one at a time.

edit

One other question… I see that the npm script sensor runs the sensor. Does that run the ‘built’ version of it, or the ‘raw’ version? (I see now, it runs the raw version.) And is there a similar way to run the processor locally? I’m imagining run-processor-dev? None of the breakpoints I dropped in there seem to trigger; I’m guessing it has something to do with how it’s being rebuilt with webpack.

Which I suppose sort of comes down to: what’s the best way for me to test the processor file as I work? The three ways I can imagine are to send results to the oursci server, to output results via ui.info(), or to use a debugger. Thanks!
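A fourth option I can imagine is running the processor logic under plain Node with a stand-in for ui. This is only a rough sketch under my own assumptions: makeUiStub and runProcessor are hypothetical names, the stub mirrors only the ui.info(title, value) and ui.error(message, hint) call shapes used in this thread (not the real UI library), and the sample data is made up.

```javascript
// Hypothetical stand-in for the real `ui` object: it only records calls.
// The argument names are my guess based on how the calls look in my processor.
function makeUiStub() {
  const calls = [];
  return {
    calls,
    info: (title, value) => calls.push({ level: 'info', title, value }),
    error: (title, hint) => calls.push({ level: 'error', title, hint }),
  };
}

// Hypothetical wrapper: the processor body as a function of its inputs,
// so it can be called with test data instead of processor.getResult().
function runProcessor(result, ui) {
  Object.keys(result.error || {}).forEach((a) => {
    ui.error(`Answer '${a}' is ${result.error[a]}`, 'Return to the answer and fix it.');
  });
  ui.info('Fecha', result.info_general.fecha);
}

// Made-up sample shaped like the fields referenced in this thread.
const sample = {
  info_general: { fecha: '2019-01-01' },
  error: { fecha: 'missing' },
};

const ui = makeUiStub();
runProcessor(sample, ui);
console.log(ui.calls);
```

With something like this, a regular Node debugger breakpoint inside runProcessor should be reachable without going through the webpack rebuild, though it says nothing about how the real ui renders.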

another update

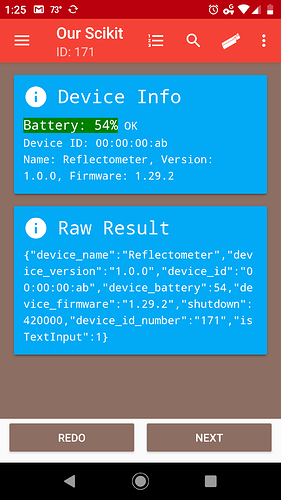

I’m playing around with UI and run-processor-dev, trying to get ui.info() to display anything at all. Here’s the current processor file, with a bunch of defines and the includes left out.

    (() => {
      const result = (() => {
        ui.info('Fecha', result.info_general.fecha);
        if (typeof processor === 'undefined') {
          return require('../data/result.json');
        }
        return JSON.parse(processor.getResult());
      })();

      Object.keys(result.error).forEach((a) => {
        ui.error(`Answer '${a}' is ${result.error[a]}`, 'Return to the answer and fix it.');
      });

      ui.info('Fecha', 'yesyesyes');
    })();

All I’m seeing in the browser after run-processor-dev is a blank brownish page with the following source.

https://pastebin.com/HNAU0HNs

Also, I’m getting a few warnings about the UI library when I run it:

https://pastebin.com/2jh76ThU